Can LLMs Innovate?

On First-Principle Thinking, Analogical Reasoning, and the 'Water' of Personal Experience

Hi friends,

Some quick life updates:

Halfway through my time at Stanford!!

Joined a popup city up in Healdsburg, California for a month, and am currently a resident at another (vertical) popup in SF for the summer

Started an internship at a legaltech startup in SF to work on reasoning x product

Building a ‘deep brainstorm’ tool!

Last quarter, I tried to force LLMs to be more ‘innovative’ as a fun class project. The core premise is this: the way that LLMs work (next-token prediction) means that their output will always be what’s statistically probable, while the deepest forms of innovation, by definition, will have no statistical precedents. If we are to build a future where AI takes on the roles of scientists, strategists, inventors - if we are to have agentic systems that don’t just execute on the “future work” section of research papers but pioneer entirely new fields - then the question we ought to be asking is:

What, fundamentally, does it mean to ‘innovate’?

What is innovation? Are there different types of innovation? Is it different from ‘creativity’? And how do humans innovate? The term ‘innovation’ is so loaded that it’s often difficult to point to something and say “this is the definition of innovation”. But heck, we thrive on ambiguity, so let’s try to unpack this bit by bit. Everything I’m writing here has been fermenting in my head for quite a while, and it all started with a simple thought experiment:

Suppose we’re living in the world before the introduction of behavioural economics as a distinct discipline.

Could an LLM, trained on diverse text corpora that don’t contain any explicit links between psychology and economics, ever independently ‘connect the dots’ to propose ‘behavioural economics’?

The thought experiment is no doubt flawed, since behavioural economics wasn’t just aha!-ed into existence by a random dude who connected the fields after his breakfast cereal on a gloomy Tuesday. Some innovations may inch forward, some may be conceptual leaps, or maybe all ‘conceptual leaps’ are simply incremental progress in disguise, piling up until it eventually passes a critical threshold. But insofar as we agree that innovation is a process of “connecting previously unconnected dots”, what I’m essentially curious about is whether LLMs can connect dots in a way that produces radically useful solutions to a given problem. They do, after all, have a LOT of dots to work with.

Note that I’m not arguing that LLMs should be the ones doing all the innovating - I believe, as I will argue in the latter half of this piece, that we humans have a unique role to play. But the question of whether LLMs can innovate is fascinating nonetheless.

Let’s dive in.

Horses, Automobiles, Protein Folding

Some thoughts:

Innovation is creativity applied to problem-solving

A creative output is one that’s both novel AND exemplary (not necessarily applied to problem-solving)

Perceived magnitude of innovation is proportional to perceived complexity of problem

The question of whether LLMs can ‘innovate’ is essentially one about whether they can reason like a highly-creative human to solve a highly-complex problem

Harvard Business Review proposed that there are 4 types of innovation: incremental, disruptive, architectural, and radical. Similarly, another framework (somewhere) classified innovation along the axis of its application domain: product, process, business, etc. But I guess none of us are here reading this because we wanted to discuss business transformation, and it also doesn’t make sense for the categorization of innovation to be detached from the problem if it’s so intimately tied to the concept of problem-solving. So let me propose the following framework instead:

“Open-World” vs “Close-World” Innovation.

Open-world innovations are creative problem-solving for ‘open-world’ problems. Close-world innovations are creative problem-solving for ‘close-world’ problems. Close-world problems are problems that are well-defined. Open-world problems are problems that are ill-defined. But both involve problems that are complex as hell - that’s why a solution here would even qualify as an ‘innovation’ in the first place.

What’s the difference between a well-defined and an ill-defined problem? It concerns whether the ‘innovation’ is more so 1) a function of solving a problem or 2) that of defining what the problem we ought to be solving actually is in the first place.

Let me explain.

Close-World Innovation: Complex, Well-Defined Problems

DeepMind’s AlphaFold is a classic example of ‘close-world’ innovation. The problem researchers are trying to tackle - the ‘protein-folding problem’ - is well-defined: “given a sequence of amino acids, what is the 3D structure the protein will naturally adopt?” But just because it’s well-defined doesn’t mean it’s not complex: even a small protein could theoretically yield a mind-boggling number of possible configurations, and it’d “take longer than the age of the known universe to enumerate all possible configurations of a typical protein by brute force calculation”. The ‘innovation’, in this case, essentially comes from the introduction of a new method (a deep learning algorithm used in a clever way) that effectively handled the problem’s inherent complexity.

In other words, the problem is [X]. We couldn’t solve [X] till now because it’s too complex. We’ve finally found a way to solve [X] using a new method [Y]. We’ve achieved a breakthrough innovation.

Open-World Innovation: Complex, Ill-Defined Problem

Now consider the invention of the automobile. If we were 2 normie cofounders in the 19th century doing an AI B2B SaaS transportation and logistics startup, the question we’d likely be (consciously or subconsciously) trying to answer would be: “how do we make the horse go faster?”. This means we’d be thinking along the lines of: better horse feed, lighter horse carriages, annual subscription membership for Anytime (horse) Fitness, etc. It also means that we’d never be the startup that revolutionized the whole industry, although we could arguably still raise a pretty huge seed round from that one VC with the thesis that the future of transportation is jacked.

The automobile was invented because the creator asked a fundamentally different question. Instead of “how do we make the horse go faster?”, they asked: “how do we get from point A to point B faster?”. While most folks at the time would have assumed that the horse is necessary for speedy travels, the invention of the automobile started with questioning this assumption to understand what the problem is fundamentally about. Only by removing the horse from the equation were we able to even begin to imagine what else is possible.

But this is confusing: isn’t the question of “how do we make the horse go faster” a much more ‘well-defined’ problem than “how do we get from point A to point B faster?” Technically yes, but it’d be useful to zoom out here. Once we look at the entire problem space as a whole, we’ll see it’s precisely this very fact that we can come up with different questions to ask and different problem-framings to adopt and still be ‘correct’ (I mean, I’d assume that a jacked horse does help us move faster) that makes the problem an open-world, ill-defined one.

If there can be multiple ways to look at a problem, then what ought to constitute the deepest form of innovation is finding the specific way that provides the maximum leverage. What we’re essentially doing when we begin viewing the problem of transportation as that of “how to get from point A to point B faster” is taking a step back from the current well-defined problem (how do we get the horse to move faster?) to have a shot at the framing of maximum leverage. We’re trying to arrive at a different well-defined problem to work on.

Whether this redefinition - or reframing - of the problem is actually the ‘right’ one depends on whether we’ve taken the step backwards properly. What we need, in a sense, is to get to the deepest level of the problem and see what the problem is really about.

We want to start from the skeleton of the problem.

Seeing Bones, Matching Skeletons

First Principle Thinking

First principle thinking is all about diving deep - to go beneath the skin and the muscles and the fat and see the bones themselves. Effective problem reframing, then, can be thought of as the process of going a layer deeper from seeing the musculature to actually seeing the bone structures. Often lauded as the main ingredient of their success by the most prolific innovators of our time like Elon Musk, the idea here is that breaking an especially-complex problem down to its most basic elements first is often the best way to understand how to solve the problem. The more complex the problem, the more important this becomes. And in the case of ‘open-world’ problems, it’s ultimately the process we could adopt to get to the best way to frame the problem.

Sometimes, it’s also what we could adopt to introduce a paradigm shift in the way we do things IF the reason we’ve been doing things a certain way thus far is because we assumed that it’s the only way we could - that “it’s just how things are done”.

A case in point is Elon Musk. As one of the most ardent advocates of first-principle thinking alive today, Musk’s journey to founding SpaceX started with a simple question: why can’t rockets be reusable? Most folks at NASA - and everyone else by extension - had accepted single-use rockets as the norm, but what Musk realised was that most of the answers he’d been getting to the question were not actually grounded in immutable physical laws but in path-dependent assumptions: that reusability was “too risky”, “too costly to test”, “too unproven”, or “just isn’t possible”. Ultimately, what he saw was that the materials themselves only accounted for a small fraction of the rocket’s price - thereby suggesting that reusability is not, after all, a theoretical infeasibility. The process of getting to this realisation that there is nothing fundamentally blocking him from reusing rockets is the process of surfacing and questioning assumptions to understand what the problem is actually about. That’s precisely what first-principle thinking is.

But there are 2 caveats here (and 1 more which I’ll leave for later in the piece). First, we need to be able to know whether and when we have truly arrived at the ‘bones’ of the problem (and not, say, the tendons of assumptions). And second, what we have discussed so far is how we could reason TO first principles — we also need to understand how we could effectively reason FROM first principles to arrive at a breakthrough innovation.

Straight Down to the Bones

Reasoning to first principles requires not just asking questions but asking the right ones. After all, what we ideally want is to know that we are indeed diving deeper and deeper beneath the surface of the skin to reach the bones and not simply, say, bouncing up and down between layers upon layers of subcutaneous fats. There are 2 main ways to do so effectively: 1) the “5-Why’s” approach, and 2) Socratic Questioning.

The 5-Whys approach involves asking ‘why’ 5 consecutive times with the objective of diving down to the root causes of a problem. Here’s an example of using the 5-Whys approach in thinking about the topic of this piece:

For the question “Can LLMs Innovate?”:

1st Why: Why do we believe that LLMs can’t innovate?

Ans: Because LLMs generate statistically probable sequences based on their inputs, while true innovation requires originality beyond what is already known

2nd Why: Why does true innovation require originality beyond what is already known?

Ans: Because ‘true’ innovations are concerned with the 0→1 leap, and not the incremental improvements from 1→100

3rd Why: Why can’t ‘true innovation’ apply at the 1 → 100 stage?

Ans: It depends on our definition of innovation, but most examples of innovations that came to mind (AlphaFold, Steam Engine, LLM architecture, Airbnb/Uber) typically involve a ‘breakthrough’ - hence the 0 → 1 leap.

4th Why: What is the definition of innovation? (Technically a ‘what’ but it achieves the same goal)

Ans: There’s no consensus on a single definition of innovation - some define it according to the outcome (incremental improvements from 1 → 100 are also considered ‘innovations’ in this framework), while others define it according to application spaces like ‘product’, ‘business’, or ‘process’.

5th Why: What are the common threads underlying all these definitions?

Ans: The common underlying threads are: 1) emphasis on ‘novelty’, 2) ‘impact’, and 3) problem-solving

6th Why: If innovation is fundamentally about ‘problem-solving’, what types of problems are there, and when would solving them constitute innovation?

…

Note how this process effectively helps to clarify my thinking by highlighting that I need to consider what exactly innovation is first, which led to my earlier formulation of the different innovation types based on the types of problems they’re solving. Also note how this process is linear in nature - I could have very well gone down the path of clarifying my thinking around LLM architecture instead at the 2nd Why.

The linear nature of the 5-Whys approach is a fundamental limitation in isolation because we might get caught up with one aspect of a multifaceted problem without fully grappling with the others. Socratic questioning, in contrast, is a method that aims to cover a broad range of angles from the get go. It involves 6 steps:

Clarify Definitions: “What do we mean by ‘innovate’?”

Uncover Assumptions: “Is the assumption that statistical prediction precludes original insight logically sound?”

Explore Evidence: “When have LLMs produced something truly novel and useful? Might we consider these ‘innovation’?”

Search Alternatives: “Could alternative architectures or approaches enable LLMs to ‘think innovatively’?”

Check Implications: “If LLMs could innovate, what does that mean for the way we, say, currently ‘do science’?”

Question the Question: “Do we really want LLMs to be able to independently ‘innovate’ in the first place?”

Reasoning to first principles is done best when we adopt Socratic questioning for the initial breadth and the ‘5-Whys’ for subsequent depth for each thread of inquiry. In this context, the question of whether LLMs can innovate ultimately becomes one of whether they can reason like a highly-creative human to solve a highly-complex problem, which was what led me to the concept of first-principle thinking in the first place.
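To make the ‘breadth first, then depth’ idea concrete, here’s a rough Python sketch of how the two methods could be chained. It’s just one way to picture it: the `five_whys` and `explore` helpers and the `placeholder_answer` function are names I made up for illustration, and in a real session the answers would come from a person (or a model), not a stub.

```python
from typing import Callable

# The six Socratic steps listed above, applied to "Can LLMs innovate?".
SOCRATIC_PROMPTS = {
    "Clarify Definitions": "What do we mean by 'innovate'?",
    "Uncover Assumptions": "Is the assumption that statistical prediction "
                           "precludes original insight logically sound?",
    "Explore Evidence": "When have LLMs produced something truly novel and useful?",
    "Search Alternatives": "Could alternative architectures or approaches "
                           "enable LLMs to 'think innovatively'?",
    "Check Implications": "If LLMs could innovate, what does that mean for "
                          "the way we currently 'do science'?",
    "Question the Question": "Do we really want LLMs to independently 'innovate'?",
}


def five_whys(question: str, answer: Callable[[str], str],
              depth: int = 5) -> list[tuple[str, str]]:
    """Depth: follow one thread by asking 'why' about each answer in turn."""
    chain = []
    current = question
    for _ in range(depth):
        reply = answer(current)
        chain.append((current, reply))
        current = f"Why: {reply}"  # the next question digs into the last answer
    return chain


def explore(answer: Callable[[str], str],
            depth: int = 5) -> dict[str, list[tuple[str, str]]]:
    """Breadth: open one thread per Socratic step, then drill down each one."""
    return {step: five_whys(q, answer, depth)
            for step, q in SOCRATIC_PROMPTS.items()}


def placeholder_answer(question: str) -> str:
    # Hypothetical stand-in so the sketch runs; it does no actual thinking.
    return f"<answer to: {question[:40]}>"


threads = explore(placeholder_answer)
for step, chain in threads.items():
    print(step, "->", len(chain), "levels deep")
```

The point of the structure is that each Socratic step gets its own linear chain, so no single thread (like my LLM-architecture tangent) can crowd out the others.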

But apart from first-principle thinking, there’s another way of reasoning that some highly creative humans have adopted to arrive at breakthrough solutions for certain highly-complex problems. That way of reasoning is reasoning by analogy.

Reasoning by (Deep) Analogy

Solar power is the dominant form of energy generation for long-term space operations, and engineers face a unique challenge: how does one deploy large, compact, and lightweight solar arrays reliably into space? Sending stuff up to space is expensive, and there’s a severe constraint in storage volume for the carrier. Facing this multitude of constraints, typical solutions involving high mechanical complexity wouldn’t work due to the high risk of failure. The solution, ultimately, came from an unlikely source:

Origami.

What the engineers realised was that fundamentally, the problem they’re facing is about how to translate between 2D materials and 3D forms. At a more technical level, they realised that they could incorporate compliant mechanisms - a flexible mechanism in mechanical engineering that “achieves force and motion transmission through elastic body deformation”, which bypasses the need for rigid body joints. But here’s the interesting part: the engineers noticed that instead of designing an entirely new compliant mechanism for the solar arrays from scratch, they could actually draw insights from the ancient Japanese art of origami as an established precedent. After all, it is also fundamentally about translating between 2D materials and 3D forms (just that, in this case, we’re eventually planning to unfold the 3D form).

Drawing insights from a field as ancient as origami has allowed the engineers to arrive at ways to contain as much surface area of the solar panels in as compact a size as possible with minimal mechanical complexity. This process of looking for successful analogues to inform our own solution space is the process of reasoning by analogy.

Elon Musk has always bashed reasoning by analogy as a method inferior to reasoning by first principle, yet what he’s actually bashing is not reasoning by analogy done right, but reasoning by precedents. His argument is that we need first principle thinking because people will never arrive at the deepest breakthroughs if all they’re doing is referencing what has worked elsewhere or in the past. My argument, instead, is that reasoning by analogy when done right can be immensely powerful in informing potential solution spaces. Doing so requires reasoning TO first principles as a pre-requisite. We’re essentially saying that hey, now that we’ve distilled the problem into its fundamental first principles, why not see if there are other domains that have the same first principles, and see if we could borrow anything instead of starting from scratch ourselves? It’s Reasoning-FROM-First-Principles-as-a-Service, in a sense.

But how can we ever be sure that we’re doing it right? Yes, we need to match problem domains based on first principles instead of superficial similarity, but it’s slightly more nuanced than that: we also need to consider the difference between a bone and a skeleton.

Structure-Mapping Theory

First principles are bones, but when it comes to highly-complex problems, what we need to be matching are different domains’ skeletal structures. But first, we need to understand the theory of analogical reasoning: Structure-Mapping Theory.

Developed by Dedre Gentner in 1983, Structure-Mapping Theory (SMT) was a groundbreaking improvement upon previous theories of analogy because it distinguished analogy from literal similarity. Consider the following 2 statements:

The X12 star system in the Andromeda nebula is like the solar system

The hydrogen atom is like the solar system

The first is an example of literal similarity mapping, while the second is an example of an analogy. Intuitively, we know that “the hydrogen atom is like the solar system” is an analogy because just like how the electrons revolve around the nucleus, so too do the planets revolve around the sun. What makes this comparison an analogy is that unlike the comparison between the X12 star system and the solar system, what’s retained is ONLY the relational properties (“A revolves around B”) and NOT the attributes (both X12 and solar systems have planets). In SMT-terms, the hydrogen atom is referred to as the ‘target domain’, while the solar system is the ‘base domain’ - the domain from which we draw parallels to apply to the target domain.

Note how this sounds similar to first-principle thinking? What we essentially did was to abstract away all that’s not fundamental to find that oh look, the atom is actually just some small floating things revolving around a large blob in the centre! (Ok this is simplistic) The analogy, then, comes in realising that actually the solar system is also something that has small floating things revolving around a large blob in the centre :o.

Drawing parallels from the base domain to arrive at a breakthrough innovation in the target domain goes a step further than pure analogy in that it’s analogy applied to problem-solving. We want to know what the way the base domain solved the problem might suggest for how we could approach ours. But how effective this is depends on how good the analogy is. And this is where the difference between the bone and the skeleton comes in.

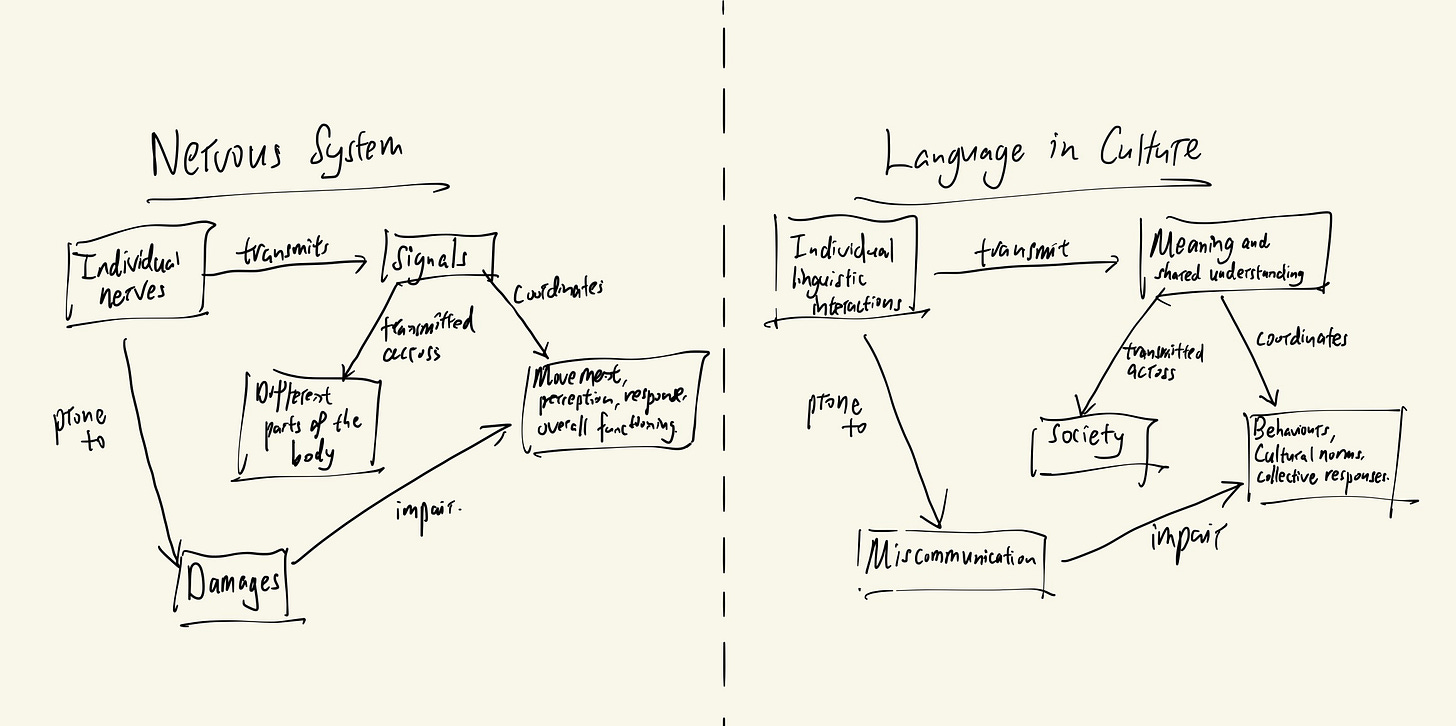

“Language is the Nervous System of Culture”

The above is an analogy that came up in my conversation with Susan, a friend I met at Edge Esmeralda (a popup city). It just feels like an analogy that hits different - why’s that?

Analogies like this invite us to consider a different way of viewing a certain object, domain, topic. It encompasses a certain insight that someone else has distilled about the target domain - and what is insight other than the connecting of previously-unconnected dots that, in retrospect, makes so much sense? This analogy is (in my opinion) a great analogy, and I’ve been musing about what it is about the analogy that made it so great. The answer I arrived at boils down to what Dedre Gentner calls the Systematicity Principle of structure mapping.

The idea is this: analogies are powerful not merely because two things share isolated features, but because they share interconnected relational structures.

Think of it in terms of bones and skeletons. In isolation, the bones of first principles wouldn’t tell us much about the organism that is the complex problem; what we ideally want to know is that judging from how the spine is connected to the base of the skull instead of its back, the organism we’re looking at likely has an upright posture.

The skeletal structure of the analogy of “Language is the nervous system of culture” probably looks something like this:

This structural mapping is characteristic of an analogy as both the target and the base domains have the same edges (relational properties) but different nodes (attributes). Likewise, it’s considered a good analogy because the mapping satisfies the systematicity principle well: the nodes can all be connected in an interdependent way to give a similar macro-level structure.
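For the graph-minded, here’s a small Python sketch of the ‘same edges, different nodes’ idea, using the atom / solar system example from earlier rather than the language / nervous system one. Treat it as a toy under loud assumptions: only the “revolves around” relation comes from the text above, the other two relations are ones I added for illustration, and the brute-force search is my own shortcut, not the algorithm from Gentner’s work.

```python
from itertools import permutations

Triple = tuple[str, str, str]  # (subject, relation, object)

# Base domain. Only "revolves_around" is taken from the text;
# "attracts" and "more_massive_than" are assumed for illustration.
solar_system: set[Triple] = {
    ("planet", "revolves_around", "sun"),
    ("sun", "attracts", "planet"),
    ("sun", "more_massive_than", "planet"),
}

# Target domain, with the same illustrative relations.
atom: set[Triple] = {
    ("electron", "revolves_around", "nucleus"),
    ("nucleus", "attracts", "electron"),
    ("nucleus", "more_massive_than", "electron"),
}


def nodes(graph: set[Triple]) -> list[str]:
    """All distinct nodes in a graph, in a stable (sorted) order."""
    return sorted({n for s, _, o in graph for n in (s, o)})


def preserved_relations(base: set[Triple], target: set[Triple],
                        mapping: dict[str, str]) -> int:
    """Count base edges that still exist in the target after renaming nodes."""
    return sum((mapping[s], r, mapping[o]) in target for s, r, o in base)


def best_mapping(base: set[Triple],
                 target: set[Triple]) -> tuple[dict[str, str], int]:
    """Try every node-to-node assignment and keep the one preserving most edges."""
    base_nodes, target_nodes = nodes(base), nodes(target)
    best, best_score = {}, -1
    for perm in permutations(target_nodes, len(base_nodes)):
        mapping = dict(zip(base_nodes, perm))
        score = preserved_relations(base, target, mapping)
        if score > best_score:
            best, best_score = mapping, score
    return best, best_score


mapping, score = best_mapping(solar_system, atom)
print(mapping, score)
```

Here the winning mapping sends the planet to the electron and the sun to the nucleus, and all three edges carry over together. That ‘carrying over together’ is a crude stand-in for systematicity: a mapping that preserved only one isolated edge would be matching a bone, not a skeleton.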

Taken together, to have a best shot at innovation for any given complex problem, we need to:

Reason TO first principles (individual graph tuples - bones)

Identify interrelationships between first principles (graph structure - skeletons)

Reason FROM first principle structure to arrive at potential solution (optional: reference base domains if similar fundamental structures exist)

But are there edge cases to this?

Rainbow Squiggly Skeletons

A recent saying I really liked is the idea that “every person is an edge case”. It reminded me of one of the most famous commencement speeches of all time, given by the (now deceased) writer David Foster Wallace, which started like this:

There are these two young fish swimming along and they happen to meet an older fish swimming the other way, who nods at them and says,

‘Morning, boys. How’s the water?’

And the two young fish swim on for a bit, and then eventually one of them looks over at the other and goes,

‘What the hell is water?’

It’s quite profound really. Think about it: what’s our ‘water’? What’s that which is invisible to us, yet permeates every aspect of our lives since the moment we’re born? That which, instead of shaping our perception of reality, is our reality?

One interpretation is that the water is our culture. Another interpretation is that the water is our experience.

Specifically, our individual experience going through life. That which is a function of our culture, gender, childhood, family, everything.

A follow-up thought experiment here is to consider what, if we are the fish, we need to do for us to ‘see’ the water we’re swimming in, but that’s the topic for another day and another Substack. What really matters for this piece is this question:

If everyone is an edge case shaped by their waters of personal experience, are there really any ‘first principles’ of human behaviour?

What if, when it comes to the ‘first principles’ of human behaviour, we’re not dealing with just any normal skeletons but rainbow, squiggly ones?

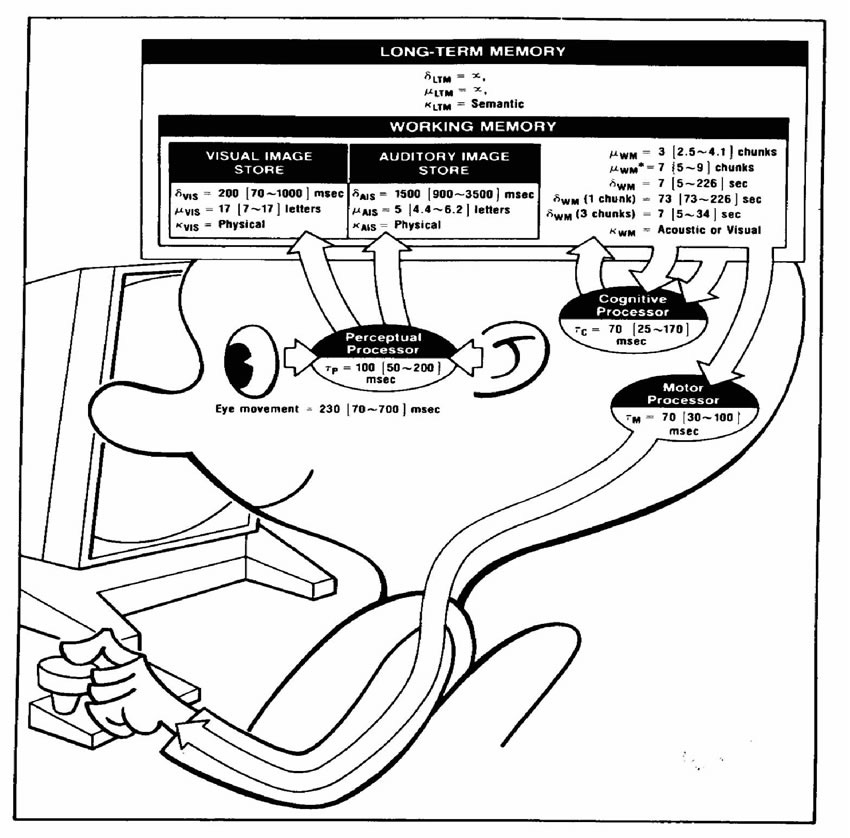

The entire fields of human-computer interaction and human-factor engineering are arguably endeavours in distilling the first principles of humans: HCI pushed for the ‘Model Human Processor’ for cognition, while human-factor engineering sought after the ‘model physical forms’ of ‘Joe’ and ‘Josephine’. Yet on the other hand, anthropology is all about acknowledging and studying the ‘thick description’ of human behaviour - the messy complexity of lived human experience in all its glorious totality. Behaviour as understood only when viewed together with the ‘water’ within which it’s observed.

There’s a fine, fuzzy line between reductionism and effective first-principle distillation. I’m currently not sure where the boundary is. Might we be able to distill a problem to its first principles while still retaining sufficient contextual nuance? If it’s still reasonable to say that one such first principle of human motivation is, for example, the need to belong, at what point does it fail to be reasonable any longer?

Some examples of contextual nuances:

Suitcases are often bought in India not for travelling, but for weddings (for the bride to use when moving to the groom’s family)

Ads work well in Asian societies (and subscription services flounder) because many people would go out of their way to save pennies. This is likely because we have less of a sense of the unit value of our time, since our paycheck is paid out per month rather than per hour. (Take this argument I heard from a podcast with a grain of salt. While it ‘makes sense’, it falls under the same category as arguments in evolutionary psychology in that it’s unfalsifiable)

What women need to feel safe is not a button to press to immediately call 911 in the case of a sexual assault, but an excuse to get out of the situation. This is because more than 80% of sexual assaults occur not with strangers but with people these women already know. Calling the police on someone they know is something few would instinctively do due to the confusion in the moment.

This holds strong implications for our discussion of whether LLMs can truly innovate: if 1) the complex problem involves humans to a certain degree (instead of, say, Elon Musk’s rockets), 2) every human is an edge case (with rainbow squiggly skeletons), and 3) LLMs are statistical machines that rarely go out of their way to mine the edges, then it seems reasonable to argue that LLMs probably can’t perform any sort of meaningful innovation on such problems. Yet we have to consider the premise on which this argument is built: that it’s ultimately about the distinction between semantic knowledge and episodic knowledge. Semantic knowledge is knowledge about the world, such as the fact that Paris is the capital of France. Episodic knowledge, on the other hand, is the knowledge of lived experiences - of what it feels like to walk through the city of love with your sweetheart on a sunny summer afternoon. LLMs excel at semantic knowledge but lack episodic knowledge, and what little episodic knowledge they hold will always be second-hand. Whether it matters that this episodic knowledge is second-hand is another issue altogether.

So … Can LLMs Innovate?

I’m of the opinion that it’d be hard for a raw LLM to zero-shot a ‘breakthrough’ innovation, especially in the field of ‘open-world’ problems. Yet LLMs are also uniquely suited for such ‘open-world’, ill-defined problems because they have so many ‘dots’ to work with to explore different ways to define the problem. A tricky tension.

I do believe, however, that we could mimic the process of innovation (first-principle thinking and analogical reasoning), possibly using a multi-agent architecture, but the assumption here is that the open-world problems we’re working on don’t fall into the category of those with rainbow squiggly skeletons. But do such problems exist? Aren’t most ‘open-world’ problems ‘open-world’ precisely because there are messy humans involved?

A possible way to reconcile this is to distinguish between the semantic and episodic knowledge nodes when constructing the structures of first principles. For such open-world problems, humans probably still need to be the ones to provide the relevant episodic knowledge since, after all, this episodic knowledge would likely exist at the edge of the LLM’s training data (if at all) and wouldn’t be easily retrieved. In the case where analogical reasoning might come in handy, it’d also be useful if we could force the LLM to traverse a few conceptual hops away from the target domain to get as close to the edge as possible.

If we’re not dealing with rainbow squiggly skeletons but normal ones, then things are a lot simpler. The problem can still be complex as hell, but what I want to explore is if we could have a way to represent its first principle structure in a way that facilitates subsequent reasoning. Knowledge graphs are an area that I intuitively feel might be worth exploring here. Some possible applications I can think of now include ‘doing’ science and remixing existing patents.
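One way to picture ‘a few conceptual hops away’ is as a walk on a concept graph: start at the target domain and collect whatever sits exactly k edges out. Below is a minimal Python sketch of that using breadth-first search. The graph is a tiny hand-written toy I made up around the solar array example, and `concepts_at_distance` is a hypothetical helper; where a real concept graph would come from (and how an LLM would be steered along it) is exactly the open question.

```python
from collections import deque

# Toy, hand-written concept graph (hypothetical).
# An edge loosely means "is closely associated with".
concept_graph = {
    "solar array": ["satellite", "compact stowage"],
    "satellite": ["solar array", "rocket"],
    "rocket": ["satellite"],
    "compact stowage": ["solar array", "folding"],
    "folding": ["compact stowage", "origami", "map folding"],
    "origami": ["folding"],
    "map folding": ["folding"],
}


def concepts_at_distance(graph: dict[str, list[str]],
                         start: str, hops: int) -> list[str]:
    """Return concepts whose shortest path from `start` is exactly `hops` edges."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distance[node] == hops:
            continue  # already at the requested radius, no need to go further
        for neighbour in graph.get(node, []):
            if neighbour not in distance:
                distance[neighbour] = distance[node] + 1
                queue.append(neighbour)
    return sorted(n for n, d in distance.items() if d == hops)


print(concepts_at_distance(concept_graph, "solar array", 2))
print(concepts_at_distance(concept_graph, "solar array", 3))
```

In this toy graph, origami only shows up three hops out from the solar array, which is the intuition behind forcing the traversal: the useful base domain may simply not be among the nearest neighbours.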

Guess I’ll have to read up more on the nature of knowledge, knowledge graphs, and chew on the implications of LLM for user research and similar disciplines dabbling in the ‘thick description’ of humanity.

Time to sleep.

HX

14/07/2025

P.S. Thank you Lucy, Edward, and Jem for listening to the yap and offering constructive critiques, Susan for the insight and inspiration from the analogy (language is the powerhouse of the cell jk), and Huijun for being a great teammate for our analogical reasoning project!

Sources for the contextual nuances examples:

(1) and (2): The Knowledge Project with Shane Parrish Episode 141: Kunal Shah - Core Human Motivations